Diagnostic accuracy software for clinical research: ROC curves with DeLong AUC comparison for up to 10 tests, sensitivity and specificity with confidence intervals, decision threshold optimisation, qualitative test evaluation, and cost-based analysis.

Evaluate whether a test can support clinical decisions

A diagnostic test is only useful if it discriminates reliably between patients with and without the condition. Before recommending a test for screening, staging, or monitoring, you need to know its sensitivity and specificity, how those change across decision thresholds, and whether it outperforms the alternatives. A test that looks adequate on AUC alone may fail at the threshold that matters clinically.

Get the full quantitative and qualitative evaluation in one analysis: ROC curves with DeLong AUC, head-to-head comparison of up to 10 tests, decision plots across every possible threshold, and cost-based optimisation that accounts for the clinical consequences of false positives and false negatives. For binary tests, PPA/NPA and kappa agreement are included too.

Test Development Medical Technologist

The Hospital For Sick Children, Toronto, Canada

What's included

Establish diagnostic accuracy with DeLong AUC for up to 10 tests

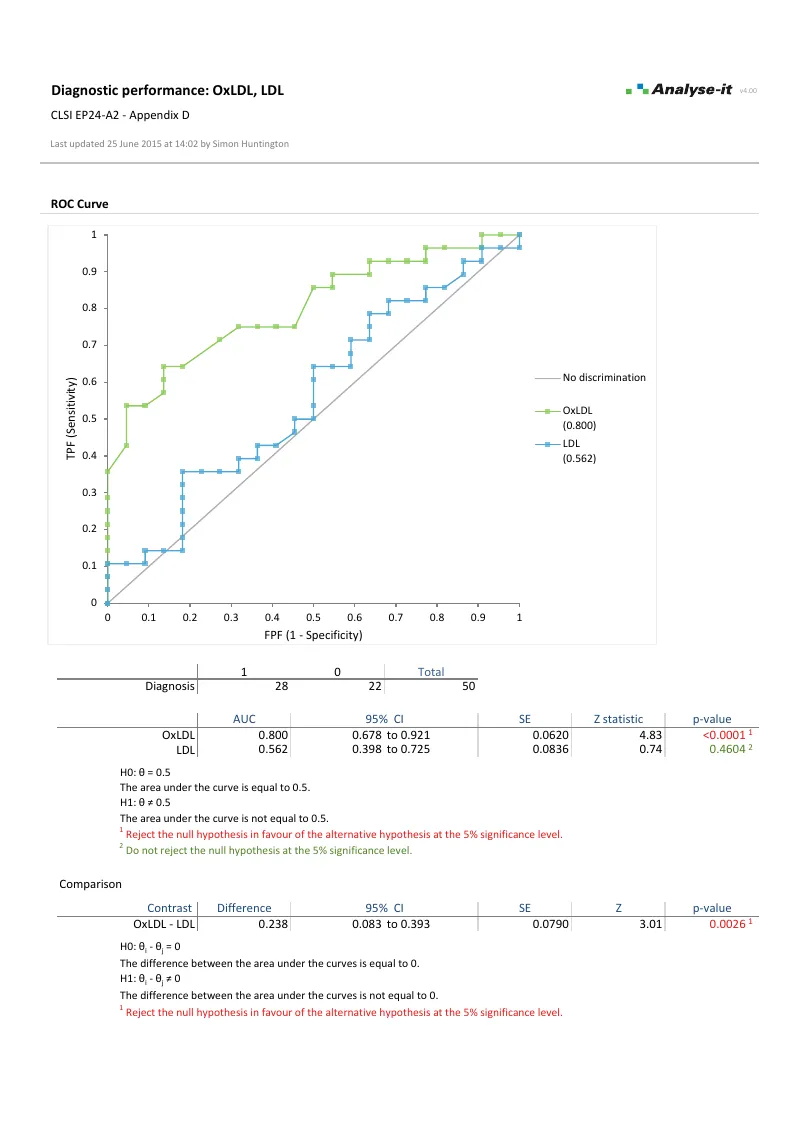

Empirical and binormal ROC curves for a single test, up to 10 paired tests, or up to 10 independent tests or groups. AUC with DeLong–DeLong–Clarke-Pearson confidence intervals and a Z test against chance (AUC = 0.5). Compare AUCs across tests with the DeLong comparison to test for equality, equivalence, or non-inferiority.

Quantify test performance with sensitivity, specificity, and likelihood ratios

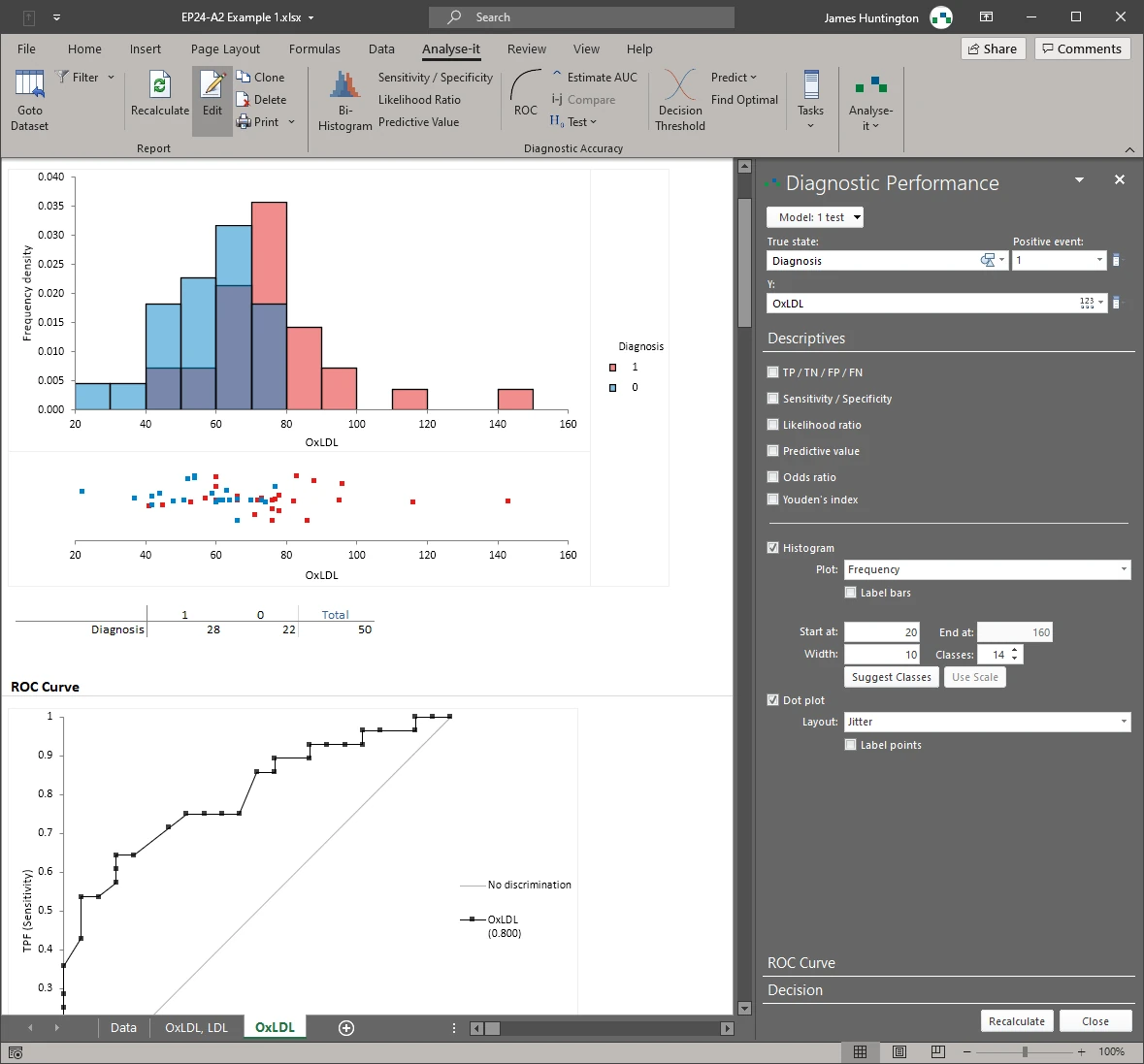

Sensitivity and specificity with confidence intervals. Positive and negative likelihood ratios. Positive and negative predictive values at any prevalence you specify, since predictive values shift with prevalence: for example, 5% for population screening versus 40% in a specialist referral clinic. Diagnostic odds ratio and Youden index. Bi-histogram and dot plot to see the separation between positive and negative cases.

Find the optimal decision threshold by Youden, closest-to-(0,1), or cost

Choose the threshold by the Youden index, closest-to-(0,1), or a cost-based criterion, such as weighting a missed cancer diagnosis at 10× the cost of a false-positive biopsy referral. Decision plots show sensitivity, specificity, likelihood ratios, predictive values, or cost across every possible threshold, so you can see the trade-offs before you commit.

Compare tests head to head with DeLong AUC comparison and partial AUC

Overlay ROC curves for multiple tests on the same plot. The DeLong AUC comparison tells you whether the difference in AUC between tests is statistically significant. Partial AUC over a clinically relevant false-positive rate range focuses the comparison where it matters: when you need high specificity, the region that counts is the low false-positive-rate portion at the left of the curve, and a small difference there can change clinical practice even when the overall AUCs are similar.

Evaluate qualitative tests with PPA/NPA and kappa agreement

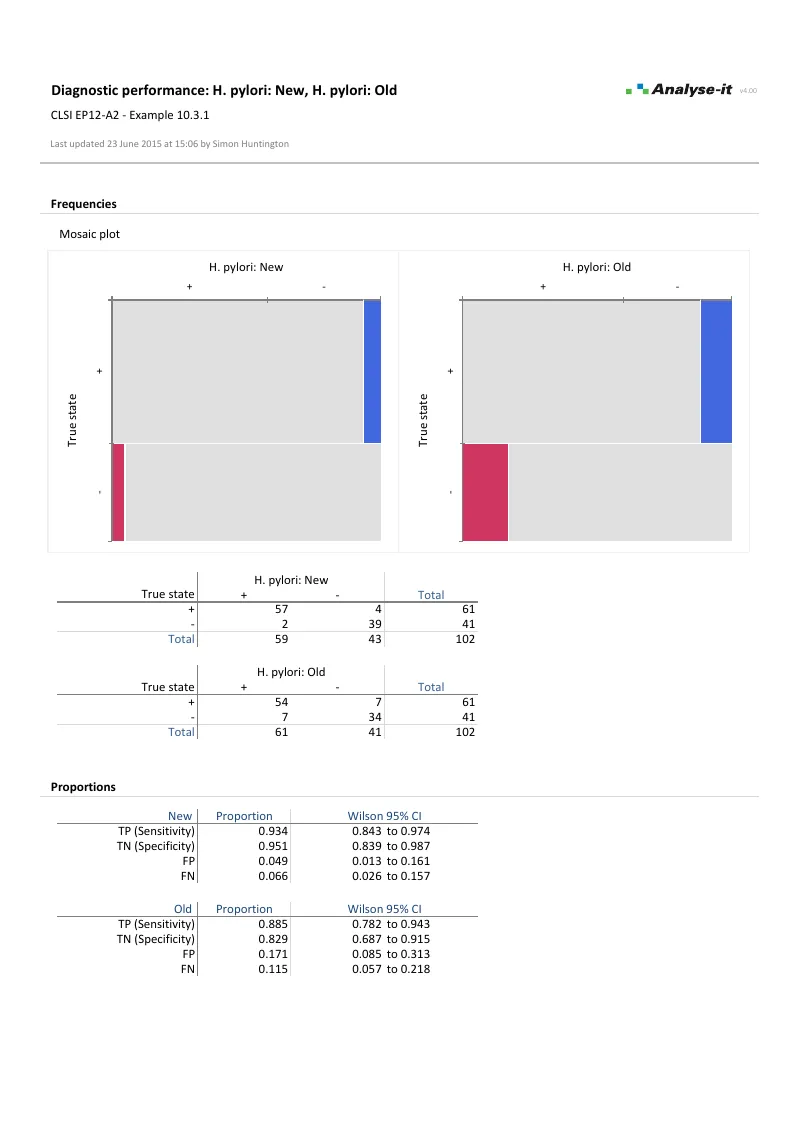

When the test result is binary — positive or negative — evaluate agreement with a reference method using proportion in positive agreement (PPA) and negative agreement (NPA). Kappa and weighted kappa for chance-corrected agreement. McNemar, Fisher exact, and score Z tests for comparing sensitivity and specificity between two paired or independent qualitative tests. Mosaic plot of outcomes.
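As a rough illustration of the agreement statistics, the sketch below works from a single 2×2 table of a qualitative test against a reference method and adds an exact McNemar test on discordant pairs. It rests on several assumptions. The function names `agreement_2x2` and `mcnemar_exact` are hypothetical. PPA and NPA follow CLSI EP12-style definitions (agreement among reference-positive and reference-negative samples), which may not match the software's definition of proportion in positive and negative agreement. No confidence intervals are computed.

```python
from math import comb


def agreement_2x2(a, b, c, d):
    """Agreement of a qualitative test with a reference method (hypothetical helper).

    a: test +, reference +    b: test +, reference -
    c: test -, reference +    d: test -, reference -
    """
    n = a + b + c + d
    ppa = a / (a + c)      # assumed CLSI EP12-style: agreement among reference positives
    npa = d / (b + d)      # agreement among reference negatives
    p_obs = (a + d) / n    # overall observed agreement
    # agreement expected by chance alone, from the row and column margins
    p_exp = ((a + b) * (a + c) + (c + d) * (b + d)) / (n * n)
    kappa = (p_obs - p_exp) / (1 - p_exp)  # Cohen's kappa
    return {"ppa": ppa, "npa": npa, "overall": p_obs, "kappa": kappa}


def mcnemar_exact(b, c):
    """Two-sided exact McNemar test: binomial test of discordant counts b and c at p = 0.5."""
    n = b + c
    if n == 0:
        return 1.0
    tail = sum(comb(n, i) for i in range(min(b, c) + 1)) / 2 ** n
    return min(1.0, 2 * tail)
```

When comparing the sensitivities of two paired tests, `b` and `c` would be the counts of diseased subjects who are positive on only one of the two tests. For specificities, they would be the corresponding counts among non-diseased subjects.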

Example analyses

See diagnostic accuracy results in detail — ROC curves, AUC comparison, decision plots, and qualitative test evaluation — using example datasets you can download and follow along with.

Part of the Medical edition

Diagnostic accuracy evaluation is one part of the Medical edition, alongside Bland–Altman agreement, reference intervals, and survival analysis — plus the full Standard edition for hypothesis testing, regression, and descriptive statistics.

For formal method validation with Passing–Bablok, Deming regression, and CLSI protocol support, see the Method Validation edition.


Technical details

ROC analysis

- 1 test, up to 10 paired tests, or up to 10 independent tests/groups

- Empirical and binormal ROC curves

- Wilcoxon–Mann–Whitney AUC with DeLong–DeLong–Clarke-Pearson CI

- Z test that AUC is better than chance (AUC > 0.5)

- Partial AUC over specified FPR range

- DeLong comparison of AUC differences: equality, equivalence, or non-inferiority

- Number of TP, TN, FP, FN

- Sensitivity, specificity with Clopper–Pearson exact or Wilson score CI

- Positive and negative likelihood ratios

- Positive and negative predictive values

- Diagnostic odds ratio and Youden index

- Optimal threshold: Youden, closest-to-(0,1), cost-based

- Predict false-positive fraction (FPF) at fixed sensitivity, sensitivity at fixed FPF, or sensitivity and FPF at a fixed threshold
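The DeLong approach behind the AUC items above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the software's implementation. The names `delong_auc` and `delong_paired_diff` are hypothetical. Higher scores are assumed to indicate disease. The Z test against chance is assumed to be one-sided and to use the DeLong standard error. Only the equality test is shown for paired tests.

```python
import math
from statistics import NormalDist


def _psi(x, y):
    """Mann-Whitney kernel: 1 if the positive case scores higher, 0.5 for a tie, else 0."""
    if x > y:
        return 1.0
    if x == y:
        return 0.5
    return 0.0


def delong_components(pos, neg):
    """Empirical AUC plus DeLong structural components.

    v10[i]: share of negatives that positive case i outranks.
    v01[j]: share of positives that outrank negative case j.
    """
    m, n = len(pos), len(neg)
    v10 = [sum(_psi(x, y) for y in neg) / n for x in pos]
    v01 = [sum(_psi(x, y) for x in pos) / m for y in neg]
    return sum(v10) / m, v10, v01


def _cov(a, b):
    """Sample covariance with an n - 1 denominator."""
    k = len(a)
    ma, mb = sum(a) / k, sum(b) / k
    return sum((ai - ma) * (bi - mb) for ai, bi in zip(a, b)) / (k - 1)


def delong_auc(pos, neg, level=0.95):
    """AUC, DeLong SE, Wald CI, and an (assumed one-sided) Z test of AUC > 0.5."""
    auc, v10, v01 = delong_components(pos, neg)
    se = math.sqrt(_cov(v10, v10) / len(pos) + _cov(v01, v01) / len(neg))
    if se == 0:
        raise ValueError("zero standard error (e.g. perfect separation); Z test undefined")
    z_crit = NormalDist().inv_cdf(0.5 + level / 2)
    z = (auc - 0.5) / se
    p = 1.0 - NormalDist().cdf(z)
    return auc, se, (auc - z_crit * se, auc + z_crit * se), z, p


def delong_paired_diff(pos_a, neg_a, pos_b, neg_b):
    """Two-sided DeLong test of AUC_A == AUC_B for two tests on the same subjects.

    Lists must be aligned: pos_a[i] and pos_b[i] belong to the same diseased subject.
    """
    auc_a, v10a, v01a = delong_components(pos_a, neg_a)
    auc_b, v10b, v01b = delong_components(pos_b, neg_b)
    m, n = len(pos_a), len(neg_a)
    var_a = _cov(v10a, v10a) / m + _cov(v01a, v01a) / n
    var_b = _cov(v10b, v10b) / m + _cov(v01b, v01b) / n
    cov_ab = _cov(v10a, v10b) / m + _cov(v01a, v01b) / n  # pairing enters here
    var_diff = var_a + var_b - 2.0 * cov_ab
    if var_diff <= 0:
        raise ValueError("zero variance for the AUC difference; Z test undefined")
    diff = auc_a - auc_b
    se = math.sqrt(var_diff)
    z = diff / se
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return diff, se, z, p
```

For an equivalence or non-inferiority comparison, the same difference and standard error would be judged against a prespecified margin rather than against zero. The margin is a study-design choice that this sketch does not supply. The simple Wald interval can also extend past 1 for AUCs close to 1.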

Qualitative test evaluation

- 1 test, 2 paired tests, or 2 independent tests/groups

- Sensitivity, specificity with Clopper–Pearson exact or Wilson score CI

- Positive and negative likelihood ratios with Miettinen–Nurminen score CI

- Predictive values with Mercaldo–Wald logit CI

- Diagnostic odds ratio and Youden index

- Proportion in positive/negative agreement (PPA/NPA)

- Kappa and weighted kappa with Wald Z CI

- Difference between sensitivity/specificity with Newcombe or Tango score CI

- McNemar–Mosteller exact, Fisher exact, and score Z test
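The core 2×2 calculations listed above (sensitivity, specificity, likelihood ratios, diagnostic odds ratio, Youden index, and prevalence-adjusted predictive values) can be sketched as follows. Several assumptions apply. The names `wilson_ci` and `accuracy_summary` are hypothetical. Only the Wilson score interval is shown; Clopper–Pearson, Miettinen–Nurminen, and Mercaldo–Wald intervals are not implemented. The ratios are also undefined at the boundaries, for example when specificity is exactly 1.

```python
import math
from statistics import NormalDist


def wilson_ci(x, n, level=0.95):
    """Wilson score interval for a binomial proportion x / n."""
    z = NormalDist().inv_cdf(0.5 + level / 2)
    p = x / n
    denom = 1 + z * z / n
    centre = (p + z * z / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n)) / denom
    return centre - half, centre + half


def accuracy_summary(tp, fn, fp, tn, prevalence):
    """Accuracy statistics from a 2x2 table; predictive values use the supplied prevalence."""
    sens = tp / (tp + fn)
    spec = tn / (tn + fp)
    lr_pos = sens / (1 - spec)
    lr_neg = (1 - sens) / spec
    # Bayes' theorem: predictive values depend on prevalence, not only on the test
    ppv = sens * prevalence / (sens * prevalence + (1 - spec) * (1 - prevalence))
    npv = spec * (1 - prevalence) / (spec * (1 - prevalence) + (1 - sens) * prevalence)
    return {
        "sensitivity": sens,
        "sensitivity_ci": wilson_ci(tp, tp + fn),
        "specificity": spec,
        "specificity_ci": wilson_ci(tn, tn + fp),
        "lr_pos": lr_pos,
        "lr_neg": lr_neg,
        "dor": lr_pos / lr_neg,
        "youden": sens + spec - 1,
        "ppv": ppv,
        "npv": npv,
    }


if __name__ == "__main__":
    for prev in (0.05, 0.40):
        s = accuracy_summary(tp=90, fn=10, fp=20, tn=180, prevalence=prev)
        print(f"prevalence {prev:.0%}: PPV {s['ppv']:.3f}, NPV {s['npv']:.3f}")
```

With the same 90% sensitivity and 90% specificity, the sketch gives a PPV of about 0.32 at 5% prevalence and about 0.86 at 40% prevalence. The same test performs very differently in screening than in a referral clinic.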

Plots

- ROC curve with confidence band

- Overlaid ROC curves for test comparison

- Decision plot: sensitivity/specificity, likelihood ratios, predictive values, or cost vs threshold

- Bi-histogram and dot plot of positive/negative outcomes (new in v4.0)

- Mosaic plot of outcomes (qualitative tests)
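The data behind a decision plot, and the three threshold criteria listed under ROC analysis, can be sketched by treating every observed value as a candidate threshold. The sketch assumes the following. A result is positive when its score is at or above the threshold. The cost criterion minimises expected cost per subject, prevalence × (1 − sensitivity) × cost of a false negative + (1 − prevalence) × (1 − specificity) × cost of a false positive, with correct results costing nothing. Ties go to the lowest threshold. The names `threshold_table` and `optimal_thresholds` are hypothetical, and the software's cost function may be defined differently.

```python
import math


def threshold_table(pos, neg):
    """(threshold, sensitivity, specificity) at every observed value; score >= threshold is positive."""
    rows = []
    for t in sorted(set(pos) | set(neg)):
        sens = sum(x >= t for x in pos) / len(pos)
        spec = sum(y < t for y in neg) / len(neg)
        rows.append((t, sens, spec))
    return rows


def optimal_thresholds(pos, neg, cost_fn=1.0, cost_fp=1.0, prevalence=None):
    """Thresholds chosen by Youden, closest-to-(0,1), and minimum expected cost."""
    if prevalence is None:
        prevalence = len(pos) / (len(pos) + len(neg))  # fall back to the sample prevalence
    rows = threshold_table(pos, neg)

    def youden(r):
        return r[1] + r[2] - 1

    def distance_to_corner(r):
        # distance from (FPR, TPR) = (1 - spec, sens) to the ideal point (0, 1)
        return math.hypot(1 - r[1], 1 - r[2])

    def expected_cost(r):
        # assumed cost model: correct results cost nothing
        return prevalence * (1 - r[1]) * cost_fn + (1 - prevalence) * (1 - r[2]) * cost_fp

    # max/min return the first (lowest) threshold when criteria tie
    return {
        "youden": max(rows, key=youden)[0],
        "closest": min(rows, key=distance_to_corner)[0],
        "cost": min(rows, key=expected_cost)[0],
    }


if __name__ == "__main__":
    diseased = [0.9, 0.8, 0.7, 0.4]
    healthy = [0.6, 0.3, 0.2, 0.5]
    print(optimal_thresholds(diseased, healthy, cost_fn=10.0, cost_fp=1.0))
```

On this toy dataset of four positives and four negatives, the Youden and closest-to-(0,1) criteria both pick 0.7. Weighting a false negative at 10× a false positive moves the cost-optimal threshold down to 0.4, which trades specificity (0.5) for full sensitivity.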