18-Feb-2013 Quantiles, Percentiles: Why so many ways to calculate them?

What is a sample quantile or percentile? Take the 0.25 quantile (also known as the 25th percentile, or 1st quartile) -- it defines the value (let’s call it x) for a random variable, such that the probability that a random observation of the variable is less than x is 0.25 (25% chance).

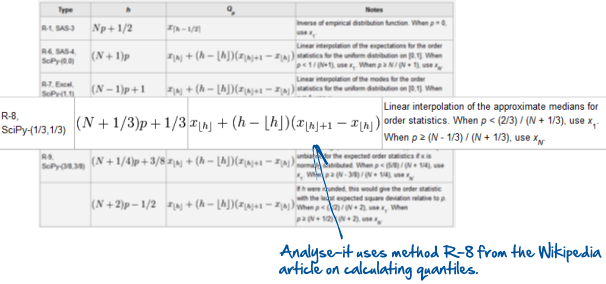

A simple question, with a simple definition? The problem is calculating quantiles. The formulas are simple enough, but a take a quick look on Wikipedia and you’ll see there are at least 9 alternative methods http://en.wikipedia.org/wiki/Quantile. Consequently, statistical packages use different formulas to calculate quantiles. And we're sometimes asked why the quantiles calculated by Analyse-it sometimes don’t agree with Excel, SAS, or R.

How are quantiles and percentiles calculated in Excel, SAS and R?

Excel uses formula R-7 (in the Wikipedia article) to calculate the QUARTILE and PERCENTILE functions. Excel 2010 introduced two new functions that use slightly different formulas, with different denominators: PERCENTILE.INC and PERCENTILE.EXC.

SAS, R and some other packages let you choose which formula is used to calculate the quantiles. While this provides some flexibility, as it lets you reproduce statistics calculated using another package, the options can be confusing. Most non-statisticians don’t know when to use one method over another. When would you use the "Linear interpolation of the empirical distribution function" versus the "Linear interpolation of the modes for the order statistics for the uniform distribution on [0,1]" method?

Why so many ways to calculate quantiles?

Many of the formulas to calculate quantiles were developed when today's computing power wasn’t available. Believe it or not some are nearly 100 years old! Now they’re merely historical curiosities, but some remain in packages like SPSS that have their roots in the 1970s.

During the development of Analyse-it we always ask: what’s the latest or best (in some sense) method we can use to calculate this statistic?

Filling Analyse-it with all the formulas invented would be easy. But that doesn’t help the average user – users that don’t have the knowledge, or can’t invest the time needed to research the most suitable method. Instead we look at published research to find the best method. If there is no single best method we implement a small set of alternatives that provide the best solution in specific situations – situations we can clearly define and explain.

Every statistical test and estimator included in Analyse-it has to pass this test to make the cut. We applied these principles when it came to quantiles.

Hyndman and Fan published a paper on calculating quantiles in 1996. It evaluated the methods used by popular statistics packages to calculate quantiles, with the intention to find a consensus on which all statistics packages could standardise. Of the 9 formulas used, 4 formulas satisfied five of the six properties desirable for a sample quantile and their derivations were deemed justified. Of those 4 formulas, Hyndman and Fan felt the "Linear interpolation of the approximate medians for order statistics" (method R-8 on the Wikipedia page) formula was best due to the approximately median-unbiased estimates of the quantiles, regardless of the distribution. Of the remaining formulas, 2 were also distribution-free but were not unbiased, and the other was approximately unbiased only for the normal distribution. They concluded that formula R-8 should be adopted as the standard across software packages.

That was 1996. Unfortunately little progress has since been made toward standardisation. Many statistical packages have a long history (even Analyse-it is over 15-years old now!) and most tend to stick to the same method to maintain backwards computability with older versions. Even R, a relative newcomer, doesn't use the recommended formula. It uses formula R-7 by default, for compatibility with S (http://stat.ethz.ch/R-manual/R-patched/library/stats/html/quantile.html). Minitab, SPSS and SAS use R-6.

Consistency between the statistics and plots.

To complicate the situation further quantiles and percentiles are also used in statistical plots. The Tukey box-plot, for example, uses the 1st and 3rd quartiles (0.25 and 0.75 quantiles) for the extent of the box element of the plot.

Frigge, Hoaglin and Iglewicz published a paper in 1989 that looked at how quantiles were calculated in 3 of the major statistical packages. They found that because each package used a different formula, each identified different observations as outliers. Confusing! They recommended statistical packages use the "Ideal or Machine Forths" formula for consistency, which is equivalent to using method R-8 to calculate quartiles.

What technique does Analyse-it use?

By now you can probably guess that we chose to use R-8 formula in Analyse-it.

Formula R-8 is recommended as the standard in both papers cited above, for descriptive statistics and plots. And using a single formula avoids the confusing situation you’ll sometimes see with other statistics packages, where the 1st and 3rd quartiles used for the box-plot differ from sample .25 and .75 quantiles.

Of course using formula R-8 can lead to some differences between the quantiles calculated by Analyse-it and other packages, though often you'll only see it with small sample sizes. If possible, as you can in R, we recommend you change the quantile calculation to use the R-8 formula. If not, you can be sure the statistics calculated by Analyse-it are absolutely correct. And you can cite this article as to why!

Further reading:

Comments

As an expert in exploratory, robust & resistant and non-parametric stats, I'm pleased to see attention being paid to this. Hoaglin was part of John Tukey's group who were major pioneers in exploratory statistics. It is part of the modern "data-driven" approach to statistics (see the book with that title by Peter Sprent). Peter Sprent's books are all worth a look. I have written several book chapters on these topics as an applied statistician.

Comments are now closed.