1-Apr-2020 Sensitivity/Specificity and The Importance of Predictive Values for a COVID-19 test

There’s currently a lot of press attention surrounding the finger-prick antibody IgG/IgM strip test to detect if a person has had COVID-19. Here in the UK companies are buying them to test their staff, and some in the media are asking why the government hasn’t made millions of tests available to find out who has had the illness and could potentially get back to work.

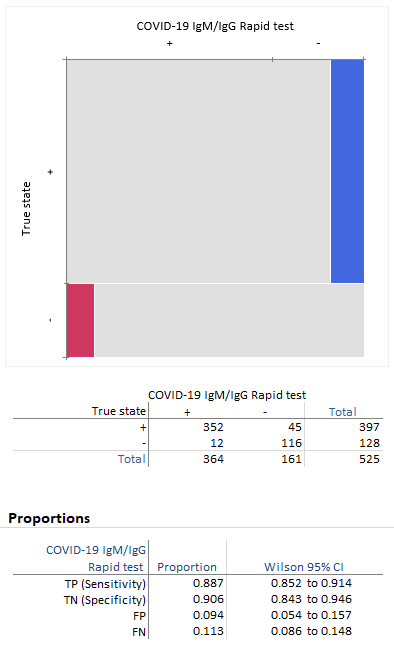

We did a quick Google search, and there are many similar-looking test kits for sale. The performance claims on some were sketchy, with some using as few as 20 samples to determine their performance claim! However, we found a webpage for a COVID-19 IgG/IgM Rapid antibody test that used a total of 525 clinically confirmed cases: 397 positives and 128 negatives. We have no insight as to the reliability of the claims made in the product information. The purpose of this blog post is not to promote or denigrate any test but to illustrate how to look further than headline figures.

We ran the data through the Analyse-it Method Validation Edition version 5.51. Here's the workbook containing the analysis: COVID-19 IgM-IgG Rapid Test.xlsx

Sensitivity/Specificity and Predictive values

We used Analyse-it to determine the sensitivity/specificity and confirmed the performance claims on the website. The sensitivity is listed as 88.66% and the specificity 90.63%. Some websites also make an “accuracy” claim, usually calculated as (TP+TN)/Total, but that’s a useless statistic because it depends on the mix of positive and negative cases in the study sample.

So, with reasonably impressive numbers around 90%, what’s the problem?

First, we need to look at the meaning of sensitivity and specificity:

- Sensitivity measures the ability of a test to detect the condition when it is present. It is the probability that the test result is positive when the condition is present.

- Specificity measures the ability of a test to detect the absence of the condition when it is not present. It is the probability that the test result is negative when the condition is absent.
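As a rough sketch, the two definitions above can be computed from the counts of a 2x2 table. The source only quotes the totals (397 positives, 128 negatives) and the resulting percentages; the counts of 352 true positives and 116 true negatives used here are an assumption, inferred because they reproduce the quoted 88.66% and 90.63%.

```python
# Counts inferred from the quoted percentages (an assumption, not stated in the source).
true_positives = 352                      # of the 397 clinically confirmed positives
false_negatives = 397 - true_positives    # positives the test missed
true_negatives = 116                      # of the 128 clinically confirmed negatives
false_positives = 128 - true_negatives    # negatives the test flagged as positive

# Sensitivity: probability of a positive result when the condition is present.
sensitivity = true_positives / (true_positives + false_negatives)

# Specificity: probability of a negative result when the condition is absent.
specificity = true_negatives / (true_negatives + false_positives)

print(f"Sensitivity: {sensitivity:.1%}")
print(f"Specificity: {specificity:.1%}")
```

Note that both figures are computed within a group whose true status is already known, which is why neither depends on how common the illness is.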

These measures give the probability of a correct test result in subjects known to be with and without a condition, respectively. To understand how a test performs in the real world, we need to look at the predictive values. They tell us how useful the test would be when applied to a population. They indicate the probability of correctly identifying a subject's condition given the test result.

Here are the definitions of positive and negative predictive value:

- Positive predictive value is the probability that a subject has the condition given a positive test result.

- Negative predictive value is the probability that a subject does not have the condition given a negative test result.

The unknown!

Here’s where things get a little trickier. To calculate predictive values, the important numbers that we need to make decisions, we need to know the prevalence of COVID-19 in the population. And, at present, that’s an unknown.
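The dependence on prevalence follows from Bayes' theorem. As a sketch, writing $Se$ for sensitivity, $Sp$ for specificity, and $p$ for prevalence (symbols introduced here for illustration, not taken from the product information), the predictive values are:

$$
\mathrm{PPV} = \frac{Se \cdot p}{Se \cdot p + (1 - Sp)(1 - p)}, \qquad
\mathrm{NPV} = \frac{Sp \cdot (1 - p)}{Sp \cdot (1 - p) + (1 - Se) \cdot p}
$$

The numerator of each is the proportion of the population with a correct result of that type, and the denominator is the proportion with any result of that type, correct or not.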

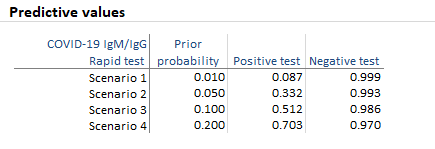

We ran the numbers in Analyse-it using four scenarios for the prevalence of the illness of 1%, 5%, 10%, 20%.

The positive predictive value, that is, the probability that someone with a positive result from this test has had COVID-19, is 8.7%, 33.2%, 51.2%, and 70.3%, respectively, whilst the negative predictive values are 99.9%, 99.3%, 98.6%, and 97.0%.
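The four scenarios can be checked outside Analyse-it with a few lines of Python. This is a minimal sketch using Bayes' theorem, not the method Analyse-it uses internally; the function name `predictive_values` is ours, and the fractions 352/397 and 116/128 are assumed counts inferred from the quoted sensitivity and specificity.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Return (PPV, NPV) for a test applied at the given prevalence."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    true_neg = specificity * (1 - prevalence)
    false_neg = (1 - sensitivity) * prevalence
    ppv = true_pos / (true_pos + false_pos)
    npv = true_neg / (true_neg + false_neg)
    return ppv, npv


# Assumed counts that reproduce the quoted 88.66% and 90.63%.
sensitivity = 352 / 397
specificity = 116 / 128

results = {}
for prevalence in (0.01, 0.05, 0.10, 0.20):
    results[prevalence] = predictive_values(sensitivity, specificity, prevalence)
    ppv, npv = results[prevalence]
    print(f"Prevalence {prevalence:.0%}: PPV {ppv:.1%}, NPV {npv:.1%}")
```

The sensitivity and specificity are held fixed across all four runs; only the prevalence changes, and that alone accounts for the spread in positive predictive value.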

A practical example

To understand what’s going on here, we’ll use an example to show how the positive predictive value works.

Let’s assume you have a workforce of 100 staff to test (the population), and that the true unknown prevalence of COVID-19 illness in your workforce is only 5%. Therefore, 5 unknown staff have had the illness, and 95 have not. We apply the test to the population (all the staff) with the following results:

- 5 who have had the illness are tested, and since the test has 88.7% sensitivity, 4 people test positive.

- 95 who have not had the illness are tested, and with the false positive rate (1-specificity) of 9.4%, another 9 people test positive.

In total, 9 + 4 = 13 people tested positive as having had the illness. But, in truth, only 4 of 13 ≈ 31% of those with a positive test have actually had the illness! That is close to the 33.2% we see in Scenario 2 above; the small difference comes from rounding to whole people.
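The arithmetic of this example can be sketched in Python, once with counts rounded to whole people and once with the unrounded expected counts. The variable names are illustrative, and the rates are the rounded figures used in the example (88.7% and 9.4%).

```python
staff = 100
prevalence = 0.05
sensitivity = 0.887           # as used in the example
false_positive_rate = 0.094   # 1 - specificity, as used in the example

had_illness = round(staff * prevalence)   # staff who have had the illness
not_had_illness = staff - had_illness     # staff who have not

# Whole-person counts, as in the example.
true_positives = round(had_illness * sensitivity)
false_positives = round(not_had_illness * false_positive_rate)
total_positives = true_positives + false_positives
ppv = true_positives / total_positives

# Unrounded expected counts, for comparison with the scenario table.
exact_true_positives = had_illness * sensitivity
exact_false_positives = not_had_illness * false_positive_rate
exact_ppv = exact_true_positives / (exact_true_positives + exact_false_positives)

print(f"{true_positives} true positives out of {total_positives} positive tests")
print(f"PPV with whole people: {ppv:.1%}")
print(f"PPV with unrounded counts: {exact_ppv:.1%}")
```

With so few staff, rounding to whole people shifts the result by a couple of percentage points; the unrounded figure is the one that corresponds to the 5% prevalence scenario.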

Conclusion

As can be seen in the example above, things aren’t as simple as they appear when you read a headline quoting a test with “90% accuracy”.

When applied to a population with a low prevalence of illness, the false positives soon overwhelm the true positives and make the test less useful. If applied to populations with a higher prevalence of the illness, such as workers who have had symptoms and self-isolated, the usefulness of the positive test result increases (see, for example, Scenario 4 in the table above).

This post presents just one example of a single test, and others with higher sensitivity/specificity will perform better. It highlights the importance of predictive values in decision making, and in evaluating whether a particular test is really that helpful.

For more information, see our online documentation:

Measures of diagnostic accuracy
