7-Aug-2008 Testing the assumption of normality

The most used distribution in statistical analysis is the normal distribution. Sometimes called the Gaussian distribution, after Carl Friedrich Gauss, the normal distribution is the basis of much parametric statistical analysis.

Parametric statistical tests often assume the sample under test is from a population with normal distribution. By making this assumption about the data, parametric tests are more powerful than their equivalent non-parametric counterparts and can detect differences with smaller sample sizes, or detect smaller differences with the same sample size.

When to check sample distribution

It’s vital you ensure the assumptions of a parametric test are met before use.

If you’re unsure of the underlying distribution of the sample, you should check it.

Only when you know the sample under test comes from a population with normal distribution – meaning the sample will also have normal distribution – should you consider skipping the normality check.

Many variables in nature naturally follow the normal distribution, for example, biological variables such as blood pressure, serum cholesterol, height and weight. You could choose to skip the normality check these in cases, though it’s always wise to check the sample distribution.

How to check the sample distribution

You can use a statistical test and or statistical plots to check the sample distribution is normal. Analyse-it includes three statistical tests for testing normality:

- Kolmogorov-Smirnov test

An EDF-type test based on the largest vertical distance between the normal cumulative distribution function (CDF) and the sample cumulative frequency distribution (commonly called the ECDF – empirical cumulative distribution function).

It has poor power to detect non-normality compared to the tests below. D’Agostino and Stephens say the Kolmogorov-Smirnov test is now really only of historical interest. - Anderson-Darling test

An EDF-type test similar to the Kolmogorov-Smirnov test, except it uses the sum of the weighted squared vertical distances between the normal cumulative distribution function and the sample cumulative frequency distribution. More weight is applied at the tails, so the test is better able to detect non-normality in the tails of the distribution. - Shapiro-Wilk test

A regression-type test that uses the correlation of sample order statistics (the sample values arranged in ascending order) with those of a normal distribution.

It’s the most powerful normality test available and is able to detect small departures from normality.

The only limitation is it’s not suitable for very large sample sizes. Analyse-it uses the latest algorithm and supports use on samples up to 5,000 observations, but some software limits use to 2,000, or as few as 50, observations.

While normality tests are useful, they aren’t infallible.

You shouldn’t rely on a normality test to exclusively to judge normality. You should look at the Normal plot, or Frequency histogram with normal overlay, to double-check the distribution is roughly Normal. The plots will also tell you why a sample fails the normality test, for example due to skew, bimodality, or heavy tails.

Small and large samples can also cause problems for the normality tests.

With small sample sizes of 10 or fewer observations it’s unlikely the normality test will detect non-normality. If you know the population distribution is normal you should still use a parametric test, as it’s more powerful, but if you’re unsure a non-parametric alternative is usually more conservative.

Conversely, for large samples, for example 1000 observations or more, the normality test might conclude a small deviation from normality is significant. You should look at the normal QQ plot to see if the deviation from normality really is significant.

Many parametric tests, such as the t-test and ANOVA, use the mean of the sample so some non-normality can be tolerated (due to the Central Limit Theorem). How large a sample you need depends on how skewed the sample distribution is – the more skewed the data, the larger the sample size should be – so it’s not possible to give hard and fast rules. You should first check the degree of non-normality and, only after (careful!) consideration, decide if you can safely use the test.

Using Analyse-it to check normality

Analyse-it provides the normality tests, Normal Q-Q plot and Frequency histogram mentioned above. All are included on the single sample summary statistics (that’s a tongue twister!) report.

To display detailed summary statistics, plots, and the normality test for a sample:

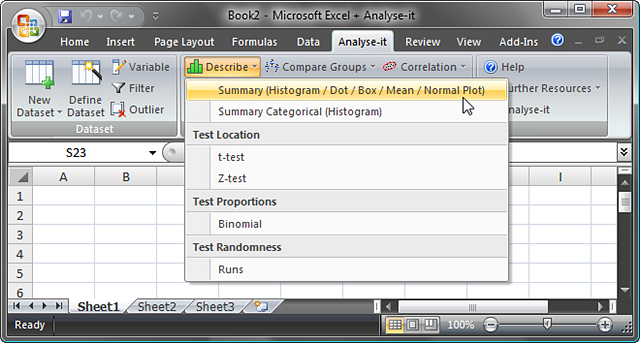

- Choose Describe > Summary from the Analyse-it toolbar.

- Select the Variable to test.

- Select the normality test to use from the Normality Test drop-down selector. When you choose a normality test, Analyse-it assumes you are checking normality and will show Normal Q-Q plot.

- Tick Overlay Normal distribution, below Histogram, to show the ideal normal distribution superimposed over the histogram bars. You can then judge the histogram bar-heights against the ideal Normal distribution.

- Click OK.

In the forthcoming Analyse-it 3.0 we’ve also made the normality tests available separately, directly from the Describe (to be renamed Distribution) menu.

How to interpret the normality test

Since the normality tests included in Analyse-it are all hypothesis tests, they test a null against alternative hypothesis. For each test, the null hypothesis states the sample has a normal distribution, against alternative hypothesis that it is non-normal.

The p-value tells you the probability of incorrectly rejecting the null hypothesis.

When it’s significant (usually when less-than 0.10 or less than 0.05) you should reject the null hypothesis and conclude the sample is not normally distributed.

When it is not significant (greater-than 0.10 or 0.05), there isn’t enough evidence to reject the null hypothesis and you can only assume the sample is normally distributed. However, as noted above, you should always double-check the distribution is normal using the Normal Q-Q plot and Frequency histogram.

On a technical note: Since we developed Analyse-it over 10 years ago, a few users have asked about the p-values calculated by Analyse-it. When calculating the p-value, Analyse-it assumes the mean and standard deviation of the population are unknown and instead estimates them from the sample. Some software packages don’t make this assumption, and go on to calculate incorrect p-values.

Comments

Depending on the shape of the distribution of the sample, you can sometimes use a transformation. A transformation is simply a function, such as log, square root, reciprocal (1/x) you apply to the observations of the variable.

Which transformation you use depends on the distribution of the sample. The transform functions above all reduce right skew -- where the right tail of the distribution is long -- to different degrees. Reciprocal exerts the most effect on right skew, then log, then square root.

If the sample cannot be transformed to be normal, and the sample size isn't sufficient that you can use a parametric test on a marginally non-normal sample, you should use a non-parametric test. Beware that even non-parametric tests have assumptions though, and shouldn't be applied with regard to the sample distribution.

Comments are now closed.